AI x Web3: Exploring the Emerging Industry Map and Future Potential

Written by: IOSG Ventures

Part One

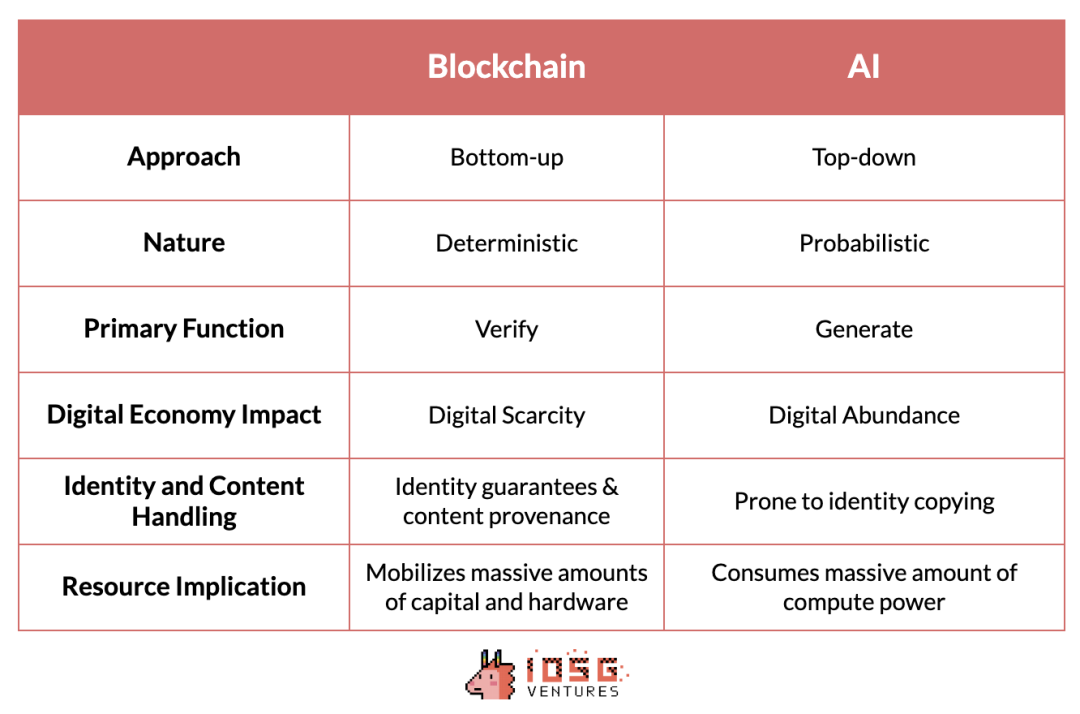

At first glance, AI and Web3 appear to be separate technologies, each built on fundamentally different principles and serving different functions. A closer look, however, shows that the two can offset each other's trade-offs, and that the distinct strengths of each can complement and enhance the other. Balaji Srinivasan articulated this idea of complementary capabilities at the SuperAI conference, which inspired a detailed comparison of how the two technologies interact.

Crypto took a bottom-up approach, emerging from the decentralization efforts of anonymous cypherpunks and evolving over more than a decade through the collaborative work of numerous independent entities around the world. AI, in contrast, was developed top-down, dominated by a handful of tech giants. These companies set the pace and dynamics of the industry, and barriers to entry are determined more by resource intensity than by technological complexity.

The two technologies also differ completely in nature. In essence, crypto is a deterministic system that produces immutable results, as with hash functions or zero-knowledge proofs. This is in stark contrast to the probabilistic and often unpredictable nature of artificial intelligence.
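The contrast can be made concrete with a minimal Python sketch. It assumes SHA-256 as a representative hash function and uses a made-up next-token distribution (`NEXT_TOKEN_PROBS`, hypothetical) to stand in for a generative model; neither choice comes from the article.

```python
import hashlib
import random


def digest(message: str) -> str:
    """Deterministic: the same input always yields the same SHA-256 digest."""
    return hashlib.sha256(message.encode("utf-8")).hexdigest()


def sample_next_token(probs: dict, rng: random.Random) -> str:
    """Probabilistic: draw one token according to the given probabilities."""
    tokens = list(probs)
    weights = [probs[token] for token in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


# Hypothetical next-token distribution, made up for illustration only.
NEXT_TOKEN_PROBS = {"cat": 0.5, "dog": 0.3, "bird": 0.2}

if __name__ == "__main__":
    # Hashing the same message twice always gives identical output.
    print(digest("hello") == digest("hello"))
    # Sampling from the same distribution can give a different answer each run.
    rng = random.Random()
    print([sample_next_token(NEXT_TOKEN_PROBS, rng) for _ in range(5)])
```

Anyone can re-run the hash and check the result, which is what makes it useful for verification; the sampled output has no such property.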

Similarly, crypto excels at verification, ensuring the authenticity and security of transactions and establishing trustless processes and systems, while AI focuses on generation, creating rich digital content. In creating this digital abundance, however, establishing content provenance and preventing identity theft become challenges.

Fortunately, crypto provides a counterpoint to digital abundance: digital scarcity. It offers relatively mature tools that can be extended to AI to ensure reliable content provenance and avoid identity theft.

A significant advantage of tokens is their ability to attract large amounts of hardware and capital into a coordinated network to serve a specific purpose. This ability is particularly beneficial for artificial intelligence, which consumes a lot of computing power. Mobilizing underutilized resources to provide cheaper computing power can significantly improve the efficiency of artificial intelligence.

By comparing these two technologies, we can not only appreciate their individual contributions but also see how they work together to forge new paths in technology and economics. Each can compensate for the shortcomings of the other, creating a more integrated and innovative future. In this blog post, we explore the emerging AI x Web3 industry landscape, focusing on some emerging verticals at the intersection of these technologies.

Source: IOSG Ventures

Part Two

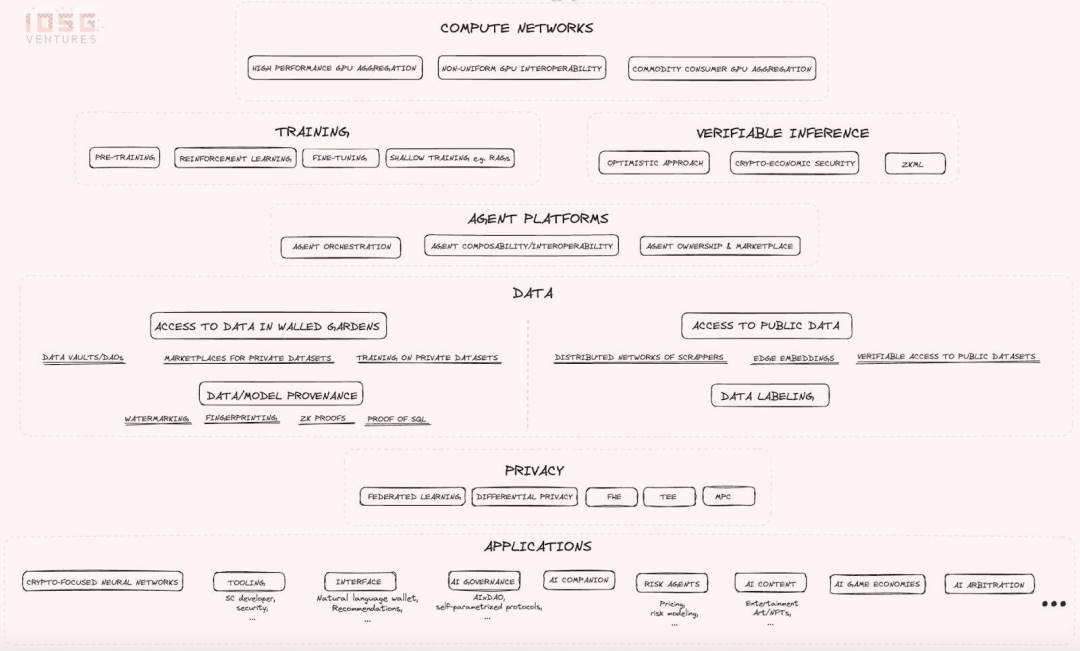

2.1 Computational Network

The industry map begins with an introduction to computing networks that attempt to address the limited GPU supply problem and try to reduce computing costs in different ways. The following are worth focusing on:

- Heterogeneous GPU interoperability: This is a very ambitious attempt with high technical risk and uncertainty, but if successful it could produce results of huge scale and impact by making all computing resources interchangeable. Essentially, the idea is to build compilers and other prerequisites so that any hardware resource can be plugged in on the supply side, while on the demand side the heterogeneity of the hardware is completely abstracted away, so that a computing request can be routed to any resource in the network. If this vision succeeds, it will reduce the current reliance on CUDA software, which is completely dominant among AI developers. Given the high technical risk, many experts are highly skeptical about the feasibility of this approach.

- High-performance GPU aggregation: Integrates the world's most popular GPUs into a distributed, permissionless network, without having to solve interoperability across heterogeneous GPU resources.

- Commodity consumer GPU aggregation: Aggregates lower-performance GPUs found in consumer devices, which are the most underutilized resources on the supply side. It caters to those who are willing to sacrifice performance and speed for a cheaper, longer training process.
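The idea of routing a computing request to any suitable resource in the network can be illustrated with a minimal Python sketch. The `Node` and `Request` types, their fields, and the cheapest-eligible-node rule are all hypothetical simplifications, not the design of any project on the map.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Node:
    node_id: str
    device: str            # e.g. "datacenter-gpu" or "consumer-gpu" (hypothetical labels)
    memory_gb: int
    price_per_hour: float


@dataclass(frozen=True)
class Request:
    min_memory_gb: int
    max_price_per_hour: float


def route(request: Request, nodes: List[Node]) -> Optional[Node]:
    """Return the cheapest node that satisfies the request, or None if none does."""
    eligible = [
        node for node in nodes
        if node.memory_gb >= request.min_memory_gb
        and node.price_per_hour <= request.max_price_per_hour
    ]
    return min(eligible, key=lambda node: node.price_per_hour, default=None)


# Made-up supply side mixing hardware classes.
NODES = [
    Node("a", "datacenter-gpu", memory_gb=80, price_per_hour=2.50),
    Node("b", "consumer-gpu", memory_gb=24, price_per_hour=0.40),
    Node("c", "consumer-gpu", memory_gb=8, price_per_hour=0.10),
]

if __name__ == "__main__":
    # The requester states requirements only; the hardware type is abstracted away.
    print(route(Request(min_memory_gb=16, max_price_per_hour=1.00), NODES))
```

The requester never names a device, which is the abstraction the first bullet describes. The hard part in practice, making the same workload actually run on every device, is not modeled here.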

2.2 Training and Inference

Computational networks serve two main functions: training and inference. Demand for these networks comes from both Web 2.0 and Web 3.0 projects. In the Web 3.0 space, projects like Bittensor use computing resources for model fine-tuning. On the inference side, Web 3.0 projects emphasize the verifiability of the process. This focus has given rise to verifiable inference as a market vertical, with projects exploring how to integrate AI inference into smart contracts while maintaining the principle of decentralization.

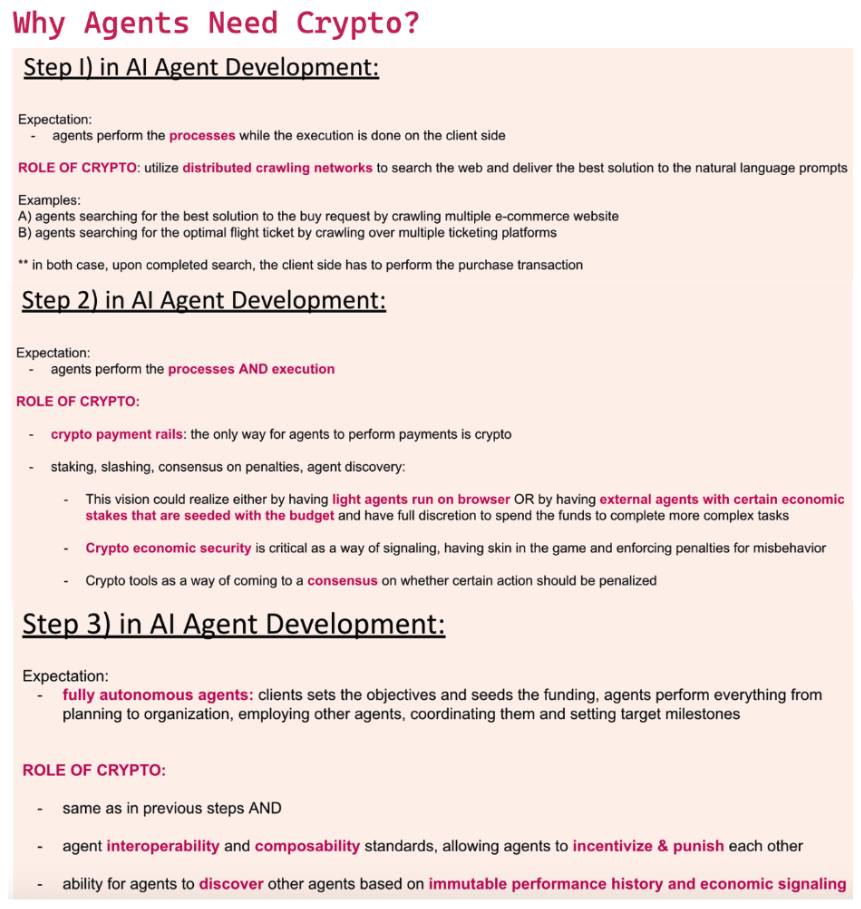

2.3 Intelligent Agent Platform

Next up are intelligent agent platforms. The map outlines the core problems that startups in this category need to solve:

- Agent interoperability, discovery, and communication: Agents can discover and communicate with each other.

- Agent cluster construction and management: Agents can form clusters and manage other agents.

- Ownership and markets for AI agents: Agents can be owned and traded on markets.

These features emphasize the importance of flexible and modular systems that can be seamlessly integrated into a variety of blockchain and AI applications. AI agents have the potential to revolutionize the way we interact with the Internet, and we believe that agents will leverage infrastructure to support their operations. We envision AI agents relying on infrastructure in the following ways:

- Accessing real-time web data through a distributed crawling network

- Using DeFi channels for inter-agent payments

- Requiring an economic deposit, not only to penalize misbehavior when it occurs but also to improve the agent's discoverability (i.e. using the deposit as an economic signal during the discovery process)

- Using consensus to decide which events should result in slashing

- Adopting open interoperability standards and agent frameworks to support building composable collectives

- Evaluating past performance based on immutable data history and selecting the right agent collective in real time
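Several of the points above (deposits as a discovery signal, consensus deciding when a deposit is reduced or "slashed", and selection based on recorded performance) can be sketched together in Python. Everything here is a hypothetical illustration: the `Agent` fields, the scoring formula, the majority-vote rule, and the 50% penalty are assumptions, not a description of any existing protocol.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Agent:
    name: str
    stake: float          # economic deposit posted by the agent
    successes: int = 0    # past outcomes, assumed to come from an immutable history
    failures: int = 0

    def score(self) -> float:
        """Discovery score: stake weighted by a smoothed historical success rate."""
        total = self.successes + self.failures
        success_rate = (self.successes + 1) / (total + 2)  # add-one smoothing
        return self.stake * success_rate


def discover(agents: List[Agent], top_k: int = 3) -> List[Agent]:
    """Return the top_k agents ranked by discovery score, highest first."""
    return sorted(agents, key=lambda agent: agent.score(), reverse=True)[:top_k]


def slash(agent: Agent, votes: List[bool], fraction: float = 0.5) -> float:
    """Reduce the agent's stake if a strict majority of validators votes to slash.

    Returns the amount removed (0.0 when the vote does not pass).
    """
    if sum(votes) * 2 > len(votes):
        penalty = agent.stake * fraction
        agent.stake -= penalty
        return penalty
    return 0.0


if __name__ == "__main__":
    agents = [
        Agent("reliable", stake=100.0, successes=9, failures=1),
        Agent("wealthy-but-flaky", stake=200.0, successes=1, failures=9),
        Agent("newcomer", stake=50.0),
    ]
    print([agent.name for agent in discover(agents, top_k=2)])
    print(slash(agents[1], votes=[True, True, False]))
```

In this toy ranking a large deposit alone does not win discovery: the agent with the smaller stake and better track record ranks first.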

Source: IOSG Ventures

2.4 Data Layer

In the convergence of AI and Web3, data is a core component. It is a strategic asset in the AI race and, along with computing resources, a key resource. Yet this category is often overlooked because most of the industry's attention is focused on the compute layer. In fact, Web3 primitives open up many interesting directions for value in the data acquisition process, chiefly the following two high-level directions:

- Accessing public Internet data

- Accessing protected data

Accessing public Internet data: This direction aims to build distributed crawler networks that can crawl the entire Internet within a few days, obtain massive datasets, or access very specific Internet data in real time. Crawling large datasets, however, places heavy demands on the network: at least a few hundred nodes are needed before any meaningful work can begin. Fortunately, Grass, a distributed network of crawler nodes, already has more than 2 million nodes actively sharing Internet bandwidth with the network, with the goal of crawling the entire Internet. This shows the great potential of economic incentives for attracting valuable resources.

While Grass levels the playing field for public data, a challenge remains in leveraging the underlying data: access to proprietary datasets. Specifically, a large amount of data is still kept private because of its sensitive nature. Many startups are using cryptographic tools that enable AI developers to build and fine-tune large language models on the underlying data structures of proprietary datasets while keeping sensitive information private.

Technologies such as federated learning, differential privacy, trusted execution environments, fully homomorphic encryption, and multi-party computation offer varying levels of privacy protection and different trade-offs. Bagel's research article provides an excellent overview of these techniques, which not only protect data privacy during machine learning but also enable comprehensive privacy-preserving AI solutions at the computational level.
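To give a feel for the privacy-versus-accuracy trade-off, here is a minimal sketch of one of these techniques, differential privacy, using the standard Laplace mechanism on a counting query. The function names and the example data are hypothetical, and this is a textbook illustration rather than anything taken from the article or the Bagel overview.

```python
import random
from typing import Callable, Iterable, Optional


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponentials with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(
    records: Iterable,
    predicate: Callable[[object], bool],
    epsilon: float,
    rng: Optional[random.Random] = None,
) -> float:
    """Return a noisy count of records matching `predicate`.

    A counting query changes by at most 1 when one record is added or removed
    (sensitivity 1), so the Laplace noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for record in records if predicate(record))
    return true_count + laplace_noise(1.0 / epsilon, rng)


if __name__ == "__main__":
    ages = [23, 35, 41, 52, 67, 29]  # made-up sensitive records
    # Smaller epsilon means stronger privacy and a noisier answer.
    for epsilon in (0.1, 1.0, 10.0):
        print(epsilon, private_count(ages, lambda age: age > 40, epsilon))
```

The single parameter `epsilon` exposes the trade-off directly: lowering it hides any individual record better but makes the released statistic less accurate.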

2.5 Data and Model Provenance

Data and model provenance techniques aim to establish processes that assure users they are interacting with the intended models and data. These techniques also provide guarantees of authenticity and origin. Take watermarking, one of the model provenance techniques, as an example: it embeds a signature directly into the machine learning algorithm, more specifically into the model weights, so that at retrieval time it can be verified that an inference comes from the expected model.
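As a rough intuition for embedding a signature in model weights, the following toy Python sketch hides key-derived bits in the parity of quantized weights and later checks for them. The scheme, the `SCALE` grid, and the function names are hypothetical simplifications: production watermarking methods are designed to survive fine-tuning and pruning, and this one is not.

```python
import hashlib
import random
from typing import List, Tuple

SCALE = 10_000  # assumed quantization grid of 1e-4 per step


def _positions_and_bits(key: str, n_weights: int, n_bits: int) -> Tuple[List[int], List[int]]:
    """Derive watermark positions and bits deterministically from a secret key."""
    seed = int.from_bytes(hashlib.sha256(key.encode("utf-8")).digest(), "big")
    rng = random.Random(seed)
    positions = rng.sample(range(n_weights), n_bits)
    bits = [rng.getrandbits(1) for _ in range(n_bits)]
    return positions, bits


def embed(weights: List[float], key: str, n_bits: int = 32) -> List[float]:
    """Return a copy of `weights` whose selected entries encode the key's bits."""
    marked = list(weights)
    positions, bits = _positions_and_bits(key, len(weights), n_bits)
    for position, bit in zip(positions, bits):
        quantized = round(marked[position] * SCALE)
        if quantized % 2 != bit:
            quantized += 1  # nudge by one grid step so the parity matches the bit
        marked[position] = quantized / SCALE
    return marked


def verify(weights: List[float], key: str, n_bits: int = 32) -> bool:
    """Check whether every selected weight carries the parity expected for `key`."""
    positions, bits = _positions_and_bits(key, len(weights), n_bits)
    return all(
        round(weights[position] * SCALE) % 2 == bit
        for position, bit in zip(positions, bits)
    )


if __name__ == "__main__":
    rng = random.Random(1)
    weights = [rng.uniform(-1.0, 1.0) for _ in range(1000)]  # stand-in for model weights
    marked = embed(weights, key="owner-secret")
    print(verify(marked, key="owner-secret"))
```

Each marked weight moves by at most 1.5 grid steps, so the change is small relative to the weight values. A verifier who knows the key can confirm the signature, while without the key the marked positions are not apparent.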

2.6 Applications

In terms of applications, the design possibilities are endless. In the industry map above, we have listed some particularly exciting developments in applying AI to the Web 3.0 field. Since most of these use cases are self-explanatory, we will not comment on them further here. It is worth noting, however, that the intersection of AI and Web 3.0 has the potential to reshape many verticals in the field, as these new primitives give developers more freedom to create innovative use cases and optimize existing ones.

Part Three

Summary

The AI x Web3 convergence brings a vision full of innovation and potential. By leveraging the unique strengths of each technology, we can solve a variety of challenges and open up new technological paths. As we explore this emerging industry, the synergy between AI and Web3 can drive progress and reshape our future digital experiences and the way we interact on the web.

The convergence of digital scarcity and digital abundance, the mobilization of underutilized resources to achieve computational efficiency, and the establishment of secure, privacy-preserving data practices will define the next era of technological evolution.

However, we must recognize that the industry is still in its infancy and that the current landscape may become obsolete in a short period of time. The rapid pace of innovation means that today's cutting-edge solutions may soon be replaced by new breakthroughs. Nevertheless, the foundational concepts explored here, such as computing networks, agent platforms, and data protocols, highlight the huge possibilities of the convergence of AI and Web 3.0.
